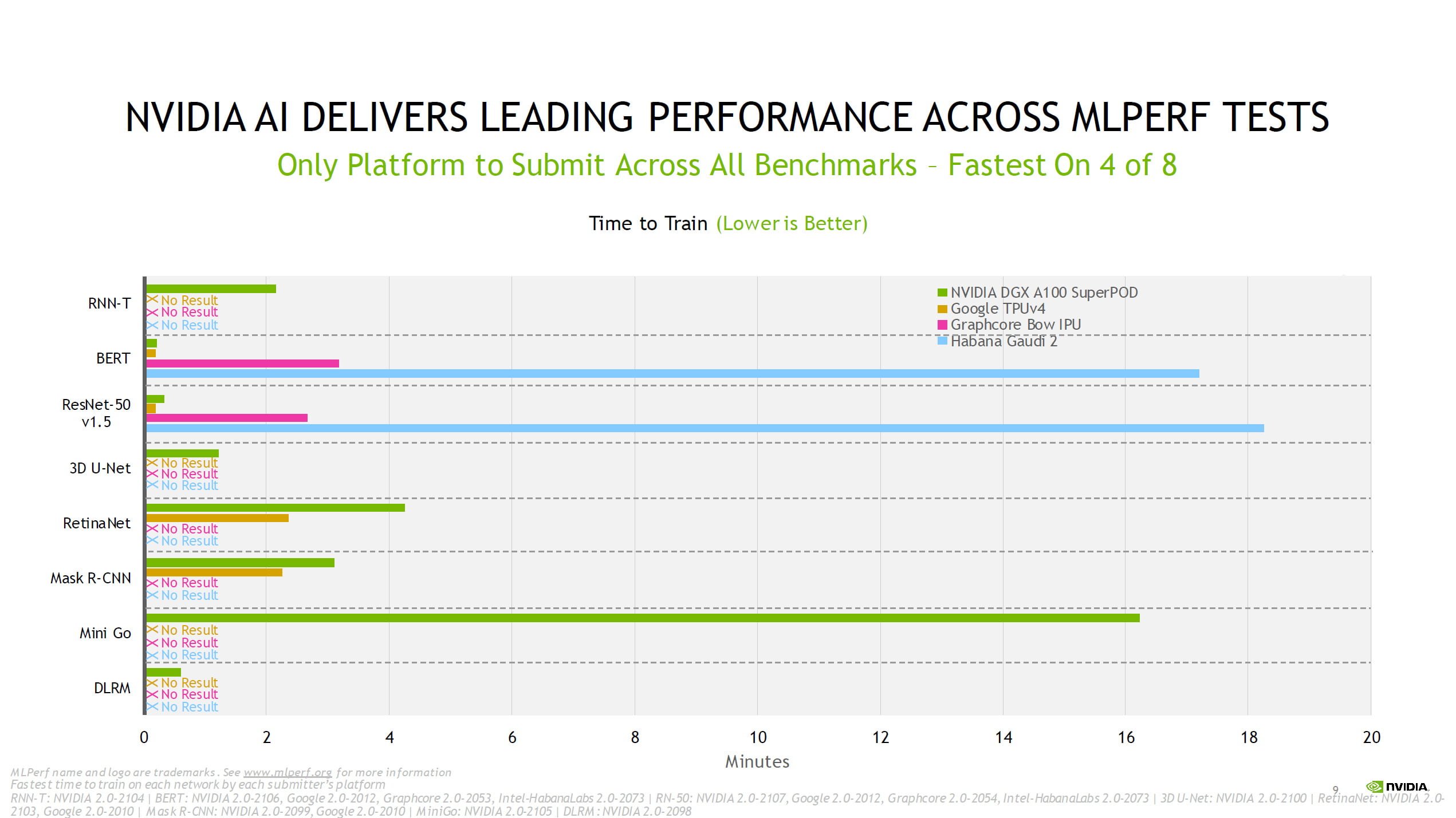

British startup Graphcore is taking on semiconductor titan Nvidia with a new computer chip designed specifically for running cutting-edge artificial intelligence algorithms. Graphcore, which is based in the English city of Bristol, unveiled a new computer chip Wednesday that packs a remarkable 59.4 billion transistors and almost 1,500 processing units into a single chip. The company said that in benchmark tests its chips, which are sold in a set of four designed to work together, performed up to 16 times faster than those from Nvidia. Nvidia's chips, which were originally designed to handle the intensive computing work needed for computer graphics, are currently the market leaders for machine-learning applications.

QuorTek: so if I read this right… this IPU undercuts Nvidia x 12 in price? in ways… Nvidia is not competitive at all, and I can assure you the big customers will go where they get the best power and value for money spent, so they could buy this 12x… and get $36 million worth of power for $3 million.

Philehidiot: But one of the big reasons Nvidia has won in compute is support and surrounding infrastructure. AMD has had awesome compute cards, but Nvidia had everyone coding using CUDA and so people can't easily switch. Even if the hardware cost is low, the total cost of changing everything surrounding those compute cards can still be high: it includes the business growth lost while devs and users put resources into learning and optimising the new stuff rather than developing and growing, as well as the unknown that is support (Nvidia seems to support pretty well, but you'd be mad to hope for similar support from AMD, who can't even code a driver properly). Hopefully they can nab some business from start ups, but they'll have to prove they can support their product properly before anyone will take the risk of moving from a known quantity like Nvidia. ***The above is opinion based on idiocy and may in no way reflect reality

Maybe so… Nokia said the phone they made would never be needed more.

Early access users will be able to evaluate IPU-POD systems via Graphcore partner Cirrascale.

IPU-Machine M2000 and IPU-POD64 systems are available to pre-order immediately, with full production volume shipments starting from Q4 2020. The IPU-POD64 building blocks help you deploy thousands of machines for large AI/ML problems or multiple concurrent workloads, and Graphcore says the system features ultra-high bandwidth, low-latency communication, enabled by its own IPU-Fabric technology.

That might be academically interesting, but I bet you are wondering how systems packing 7nm Graphcore Colossus Mk2 GC200 IPUs face off against the likes of Nvidia DGX A100 systems. Graphcore shares a comparison slide based on EfficientNet-B4 image classification. For the same performance, it asserts you would need to invest only $259k in Graphcore systems, rather than >$3m in Nvidia DGX A100 servers.
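To put Graphcore's cost claim in perspective, here is a minimal sketch of the arithmetic behind it. It assumes the ">$3m" figure is taken at its lower bound of exactly $3,000,000, so the ratio it prints is a floor rather than a precise number; the variable and function names are illustrative only.

```python
# Figures quoted by Graphcore for matching EfficientNet-B4 performance.
GRAPHCORE_COST_USD = 259_000
# Assumption: the source only says ">$3m", so we use the lower bound.
NVIDIA_COST_USD_LOWER_BOUND = 3_000_000


def price_ratio(nvidia_cost: float, graphcore_cost: float) -> float:
    """Return how many times more the Nvidia setup costs."""
    return nvidia_cost / graphcore_cost


ratio = price_ratio(NVIDIA_COST_USD_LOWER_BOUND, GRAPHCORE_COST_USD)
saving_fraction = 1 - GRAPHCORE_COST_USD / NVIDIA_COST_USD_LOWER_BOUND

print(f"Nvidia setup costs at least {ratio:.1f}x as much")
print(f"Claimed saving: at least {saving_fraction:.0%}")
```

On these vendor-supplied numbers the gap is at least about 11.6x, which is where the "roughly 12x" figure comes from. It reflects hardware price only, not the cost of switching software stacks.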

Bristol-based Graphcore has just launched its second gen IPU (Intelligence Processing Unit) systems, which target organisations that wish to do AI processing at scale. Inside these IPU-Machine M2000 1U blade systems are four of the new Colossus Mk2 GC200 IPUs, built by TSMC on its state of the art 7nm process and packing 1,472 cores each. Together they are capable of "one PetaFlop of Machine Intelligence compute".

Let us start by looking more closely at the processor that is central to all of today's announcements, the Graphcore Colossus Mk2 GC200 IPU. The key specs and features of this processor can be seen in the main diagram and I have bullet pointed them below for greater clarity.

- 1,472 IPU-Cores, each with an IPU core and in-processor memory
- 8,832 separate parallel computing threads

Graphcore says that its second gen IPU was built from the ground up using the Poplar SDK to accelerate machine intelligence. The new IPU surpasses the performance of its first gen chip (2018) by 8x in real-world tests.

The Graphcore IPU-Machine M2000 1U blade uses four of the GC200 IPUs in a pizza box size system to deliver 1 PetaFlop of AI compute. This system puts 5,888 processor cores and 35,328 independent threads at your disposal, as well as up to 450GB of off-processor streaming exchange memory.

Moving up to supercomputer scale machine learning processing, Graphcore says it has this covered too. If an IPU-Machine M2000 sounds like something you would like to expand upon, Graphcore has introduced the IPU-POD, which can facilitate datacenter-scale systems of up to 64,000 IPUs, delivering up to 16 ExaFlops of Machine Intelligence compute.
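The per-chip, per-machine and per-pod figures quoted above are consistent with one another, which a short sanity check can confirm. This sketch assumes a maximum-size IPU-POD is built entirely from four-IPU M2000 machines and that peak compute scales linearly with machine count; both are simplifying assumptions, not statements from Graphcore.

```python
# Figures quoted in the article.
CORES_PER_IPU = 1_472
THREADS_PER_IPU = 8_832
IPUS_PER_M2000 = 4
PETAFLOPS_PER_M2000 = 1
MAX_IPUS_PER_POD = 64_000

# Per-chip: hardware threads available to each core.
threads_per_core = THREADS_PER_IPU // CORES_PER_IPU

# Per-machine totals for one IPU-Machine M2000.
cores_per_m2000 = CORES_PER_IPU * IPUS_PER_M2000
threads_per_m2000 = THREADS_PER_IPU * IPUS_PER_M2000

# Per-pod: assumes every IPU sits in a four-IPU M2000 and that
# peak compute adds up linearly (an idealised upper bound).
max_machines = MAX_IPUS_PER_POD // IPUS_PER_M2000
max_exaflops = max_machines * PETAFLOPS_PER_M2000 / 1_000

print(f"threads per core:    {threads_per_core}")
print(f"cores per M2000:     {cores_per_m2000:,}")
print(f"threads per M2000:   {threads_per_m2000:,}")
print(f"machines in max pod: {max_machines:,}")
print(f"peak pod compute:    {max_exaflops:g} ExaFlops")
```

The results match the quoted 5,888 cores and 35,328 threads per machine, and 16,000 machines at 1 PetaFlop each give the headline 16 ExaFlops.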
